Harmful content continues to evolve rapidly, whether driven by current events or by people looking for new ways to evade detection systems, and it is crucial for AI systems to evolve along with it. But it typically takes several months to collect and label the thousands, if not millions, of examples needed to train each individual AI system to spot a new type of content.

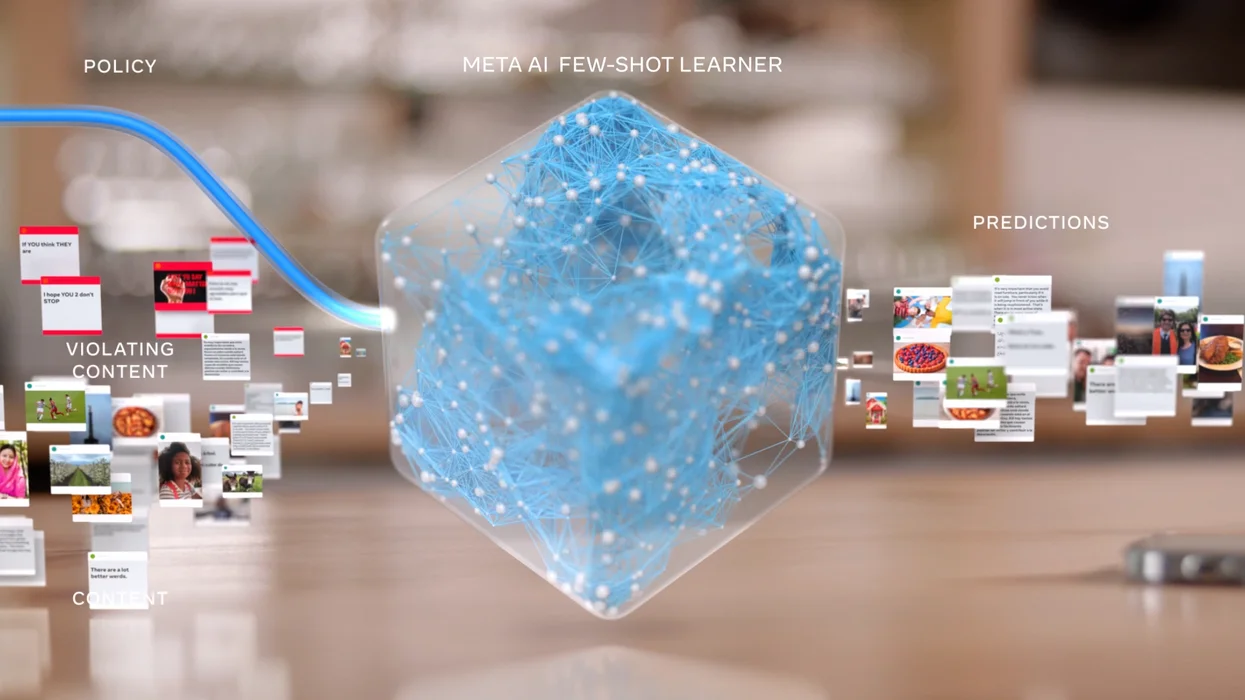

To tackle this, Meta has built and recently deployed Few-Shot Learner (FSL), an AI technology that can adapt to take action on new or evolving types of harmful content within weeks instead of months. The system uses a technique called “few-shot learning,” in which models start with a general understanding of many different topics and then use far fewer, or sometimes zero, labeled examples to learn new tasks. FSL can be used in more than 100 languages and learns from different kinds of data, such as images and text. Meta says the technology will help augment its existing methods of addressing harmful content.

Meta’s new system works across three different scenarios, each of which requires a different number of labeled examples:

- Zero-shot: Policy descriptions with no examples

- Few-shot with a demonstration: Policy descriptions with a small set of examples (n<50)

- Low-shot with fine-tuning: ML developers can fine-tune the FSL base model with a low number of training examples
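
To make the difference between the first two scenarios concrete, here is a minimal sketch in Python. It is not Meta's implementation: FSL relies on a large pretrained model, whereas this toy uses a bag-of-words vector as a stand-in encoder, and the names `embed`, `cosine`, and `score` are hypothetical. The idea it illustrates is only that a post can be scored against a policy description alone (zero-shot) or against the description plus a handful of labeled examples (few-shot). The third scenario, fine-tuning, is not covered here.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for a pretrained encoder: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def score(post, policy_description, examples=()):
    """Score a post against a policy.

    With no examples this is the zero-shot case (policy text only).
    With a few examples it is the few-shot case: the post is compared
    with the policy text and every example, and the best match wins.
    """
    references = [policy_description, *examples]
    post_vec = embed(post)
    return max(cosine(post_vec, embed(ref)) for ref in references)


# Hypothetical policy text and example, written for illustration only.
policy = "Content discouraging COVID vaccination with misleading claims"
post = "Vaccine or DNA changer?"

zero_shot = score(post, policy)
few_shot = score(post, policy, examples=["Is the vaccine a DNA changer"])
print(zero_shot, few_shot)
```

With a word-count encoder the zero-shot score depends entirely on shared vocabulary, which is why a single labeled example helps so much in this toy. A real pretrained encoder would already capture much of that meaning from the policy description itself.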

Meta tested FSL on new events

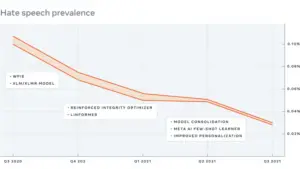

Meta has tested FSL on a few recent events. For instance, one recent task was to identify content that shares misleading or sensationalized information discouraging COVID-19 vaccinations, such as “Vaccine or DNA changer?”. In a separate task, the new AI system improved an existing classifier that flags content that comes close to inciting violence, for instance, “Does that guy need all of his teeth?”. The traditional approach may have missed these types of inflammatory posts, since there aren’t many labeled examples that use “DNA changer” to create vaccine hesitancy or references to teeth to imply violence. Meta has also seen that, in combination with existing classifiers, ongoing improvements in its technology, and changes it made to reduce problematic content in News Feed, FSL has helped reduce the prevalence of other harmful content such as hate speech.

Meta believes that FSL can, over time, improve the performance of all of its integrity AI systems by letting them leverage a single, shared knowledge base and backbone to deal with many different types of violations. There’s a lot more work to be done, but these early production results are an important milestone that signals a shift toward more intelligent, generalized AI systems.
